Direct Market Access at Venomous Speed

Fully managed, institutional-grade DMA for futures HFT - ultra-low-latency software, dedicated CME colocation, and exchange connectivity in one flat-rate subscription.

Everything You Need to Trade at Institutional Speed

Without the institutional price tag, the 18-month build cycle, or the engineering headcount.

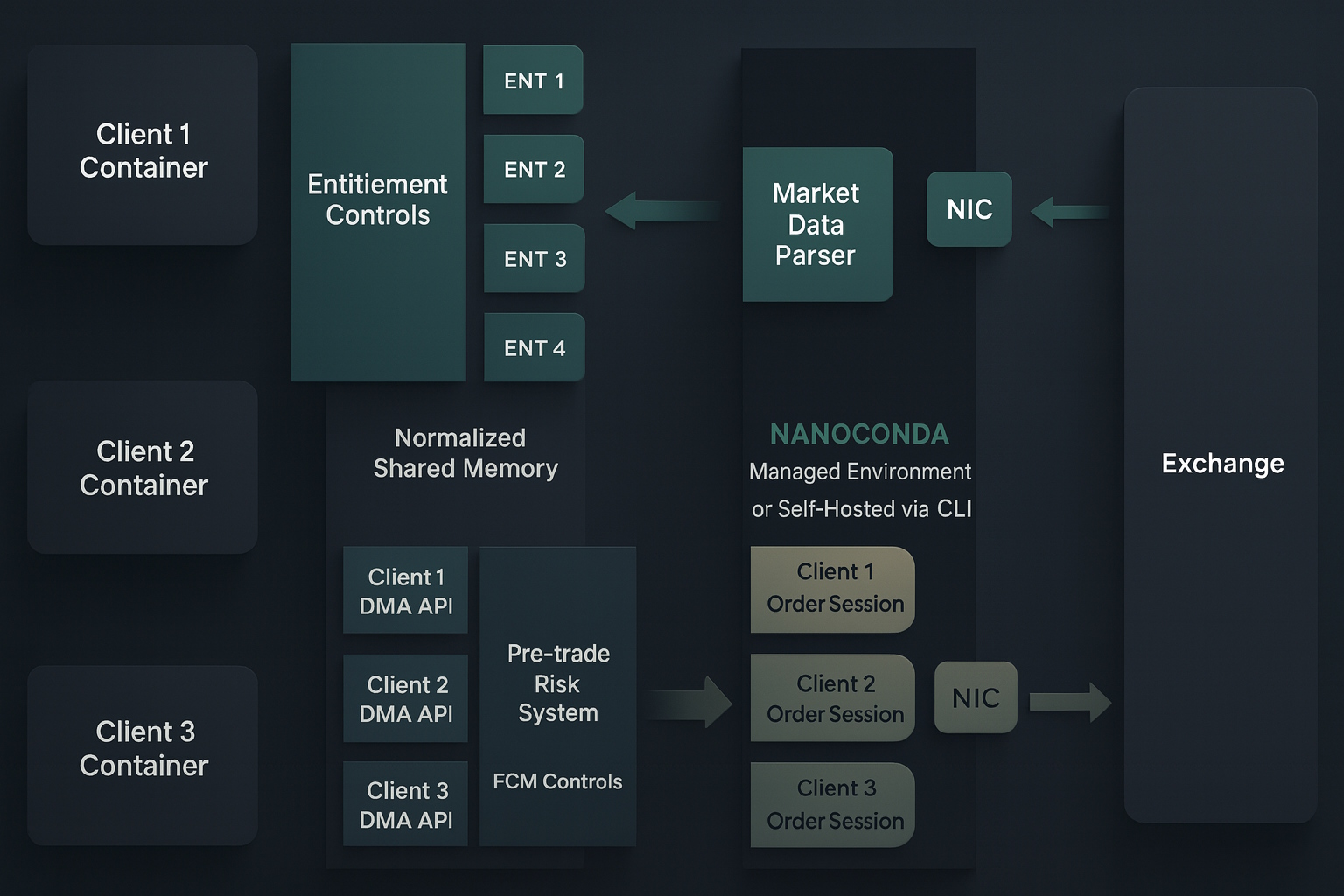

Everything in One Integrated Stack

From market data to order execution - no hidden complexity, no extra infrastructure.

The GUI Your Traders Will Use

Real-time order management, live P&L, depth of market, and a one-click kill switch - all reading directly from shared memory.

Available as a browser app and native desktop clients for Windows, Linux, and macOS.

Go Live in Three Steps

A clear path from development to live trading - with support at every stage.

We Handle the Infrastructure. You Focus on Alpha.

Building and certifying a CME gateway in-house takes 12–18 months and costs $200K–$1M. Nanoconda delivers the same institutional-grade infrastructure - software, hardware, colocation, and certification - for one flat monthly rate.

Your algorithm runs at the exchange, in-memory risk runs on the same machine, and our team operates it 24/6. You redirect every engineering hour toward your strategy.

Talk to us

Nanoconda vs. The Alternatives

Wherever you are starting, Nanoconda provides a direct path to efficient, low-latency execution.

Traditional broker APIs and FCM FIX gateways introduce abstraction layers between your strategy and CME - limiting control, restricting order types, conflating market data, and adding latency at each hop.

- ✓ ~1µs order-to-wire - not milliseconds through a broker

- ✓ Full order-by-order book data (MBO)

- ✓ Every exchange-native order type, including FAK and Iceberg

- ✓ Direct iLink 3.0 connectivity to CGW or MSGW, no intermediary

- ✓ $0 per-order routing fee - flat monthly pricing

Developing a CME-certified gateway typically requires $200K–$1M and 12–18 months - and the gateway is only one component of the full trading stack.

- ✓ Go live in days - not 12–18 months

- ✓ Colocation infrastructure in Aurora, networking included

- ✓ Real-time GUI - DOM, live P&L, algo controls, kill switch, and more

- ✓ In-memory pre-trade risk integrated with your FCM

- ✓ Fully managed 24/6 - hardware, OS, and CME certification updates

| | Broker API | Other DMA Platform | DIY DMA | Nanoconda |
|---|---|---|---|---|
| Performance | | | | |
| Setup & Operations | | | | |
| Cost | | | | |

One Platform. Four Products.

Software licenses you run yourself, or fully managed services at CME colocation. Flat-rate - no order routing fees, no surprises.

Ready to trade at institutional speed?

Professional futures traders use Nanoconda to go from zero to live on CME in days. Let's talk.

Pick a time for a quick chat

We'll take a look at your setup, share practical ideas, and point you in the right direction. You'll leave with a clear action plan, whether you use Nanoconda or not.